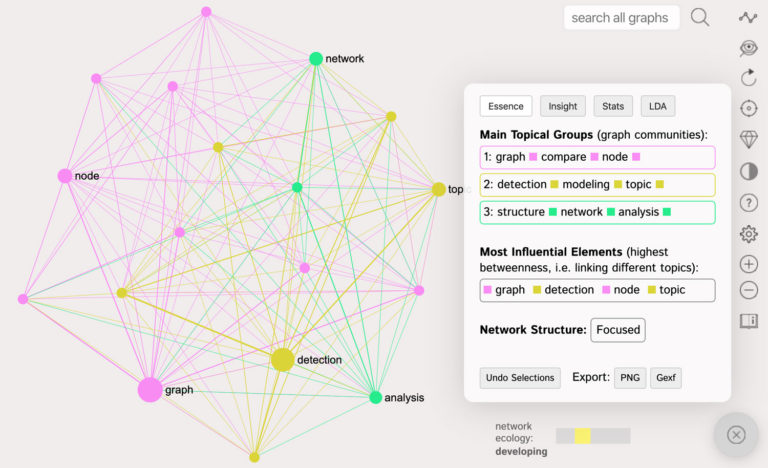

In text network analysis a text is represented as a graph using InfraNodus text analysis tool. Text Network Analysis and Visual Topic Modeling This is why there’s been numerous efforts to find other, more efficient approaches, and text network analysis is one of them. Moreover, if certain words re-occur throughout all text they might be present in all the topics identified making the results less precise. We also tend to lose the relations between the topics. several topics that re-occur in different parts of the text). the sequence of words and the structure of narrative is lost) may lead to some losses, especially for texts with complex narrative structure (e.g. Moreover, the fact that it’s a bag-of-words model (i.e. One of the main drawbacks of LDA is that it’s quite complex to understand and that it requires a precise number of topics in order to proceed.

LDA itself is a special case of pLSA - Probabilistic Latent Semantic Analysis, which is a modification of LSA (Latent Semantic Analysis). For example, lda2vec is a model that combines LDA with word2vec, providing better insights about the words and topics. Since it was introduced a few years ago, it’s gone through some upgrades. LDA is perhaps the most popular and well-tested method for topic modeling today. A distinguishing characteristic of latent Dirichlet allocation is that all the documents in a collection share the same set of topics, but each document exhibits those topics with different proportion. Words with high frequency will take more prominent position in each topic ( Blei 2012). Naturally these words tend to co-occur together in the same context. For each topic we can select the top 5 words with the highest probability of belonging to that particular topic and we will get a pretty good description of what the topic is about through the combination of words with the highest probability. After many iterations we get a list of words in each topics with probabilities. LDA then ascribes this same word to another topic and calculates the same score. LDA will then go through each word that appears in the text, randomly ascribe it to one of the four topics, and calculate a special score for this word based on the probability that this word will be found in this particular topic in the set of documents (in our case, only one document). For example, if we have one document we can specify that we’re looking for four different topics. In order for it to work, LDA needs to know how many topics it’s searching for beforehand. LDA, at its core, is an iterative algorithm that identifies a set of topics related to a set of documents ( Blei 2003). To make the long story short and to avoid complicated maths we will go through how LDA works glossing over some details, just to give a general picture. 
Latent Dirichlet Allocation (LDA) - How It Works We will start from a general overview of the two approaches and will then run a test on real data to show the differences between the two approaches and how they could be used together. Text network analysis, on the other side, takes into account both the text’s structure and the words’ sequence, providing more precise results in some cases. You will see how LDA may be less efficient for some texts as it tends to only focus on the terms’ frequency and disregard the structure of a text (“bag of words” or “vector space” model, also n-gram model where n=1). We will demonstrate how this approach can be used for topic modeling, how it compares to Latent Dirichlet Allocation (LDA), and how they can be used together to provide more relevant results. The approach we propose is based on identifying topical clusters in text based on co-occurrence of words. In this tutorial we present a method for topic modeling using text network analysis (TNA) and visualization using InfraNodus tool. Latent dirichlet allocation (LDA) is an approach used in topic modeling based on probabilistic vectors of words, which indicate their relevance to the text corpus. Topic modeling is used to discover the topics that occur in a document’s body or a text corpus.
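
The idea of representing a text as a network of co-occurring words can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how InfraNodus itself is implemented: the tiny stopword list, the `span` parameter (how many following words each word is linked to), and the function names `tokenize`, `cooccurrence_graph` and `weighted_degree` are all hypothetical choices made for this example.

```python
import re
from collections import Counter, defaultdict

# Illustrative stopword list only; a real analysis would use a fuller one.
STOPWORDS = {"the", "a", "an", "of", "and", "to", "in", "is", "on", "for"}


def tokenize(text):
    """Lowercase the text, keep alphabetic words, drop stopwords."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def cooccurrence_graph(tokens, span=1):
    """Build an undirected weighted graph from word sequence.

    Each word is linked to the `span` words that follow it, so word order
    (and not only word frequency) shapes the graph. Sentence boundaries are
    ignored in this sketch. Edges are stored as frozenset({a, b}) -> weight.
    """
    edges = Counter()
    for i, word in enumerate(tokens):
        for other in tokens[i + 1 : i + 1 + span]:
            if other != word:  # skip self-loops
                edges[frozenset((word, other))] += 1
    return edges


def weighted_degree(edges):
    """Sum of edge weights per word: a simple measure of prominence."""
    degree = defaultdict(int)
    for pair, weight in edges.items():
        for node in pair:
            degree[node] += weight
    return dict(degree)


if __name__ == "__main__":
    tokens = tokenize("Cats chase mice. Mice fear cats.")
    graph = cooccurrence_graph(tokens, span=2)
    # Print words from most to least connected.
    for word, score in sorted(weighted_degree(graph).items(), key=lambda kv: -kv[1]):
        print(word, score)
```

Topical clusters would then come from running a community detection algorithm on this graph, which is left out of the sketch. The point here is only that, unlike a bag-of-words count, the edges depend on which words appear next to each other.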